You know that moment when an entire industry's business model gets flipped on its head? We're living through one right now. Sam Altman just compared AI to electricity and water — and he's not being poetic. He's describing a fundamental rewiring of how software gets sold, delivered, and paid for. The implications? Massive. And most businesses aren't remotely ready for what's coming.

The End of Software as We Know It

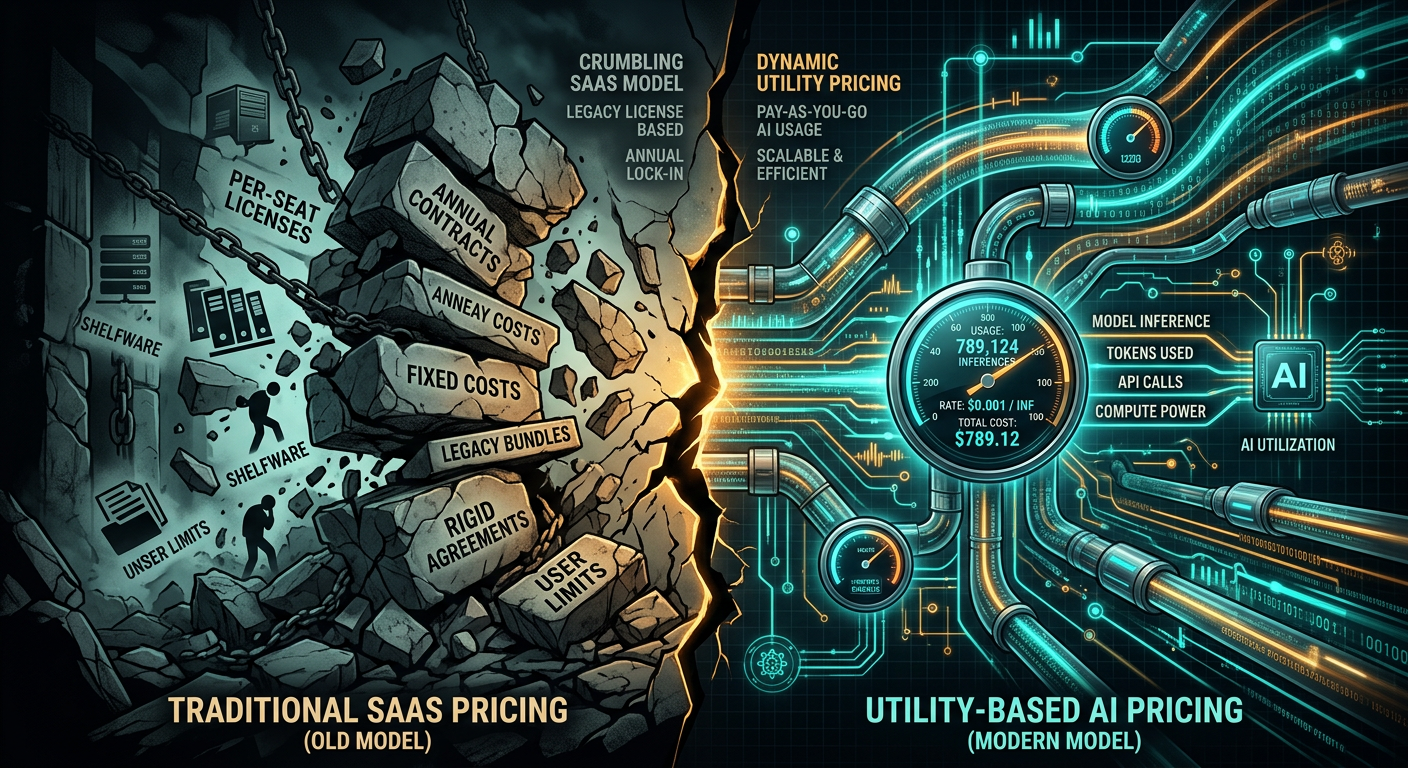

Here's the deal: The SaaS model that's dominated B2B software for the past two decades is dying. Not evolving. Not transforming. Dying.

In March 2026, OpenAI's CEO didn't mince words. AI will be sold "like electricity and water" — consumed on demand, billed by usage, delivered as a utility. No more $50K annual contracts. No more per-seat licensing. Just pure consumption-based pricing where you pay for the intelligence you use, measured in computational tokens.

This isn't some distant future scenario. It's happening now. Microsoft's Azure OpenAI Service? Consumption-based. Anthropic's Claude API? Pay per token. Google's Vertex AI? Usage pricing all the way down.

The enterprise world is already coining a term for this shift: Intelligence as a Service. The obvious abbreviation, IaaS, already means Infrastructure as a Service, so this piece uses AIaaS. And before you groan about another acronym, understand this: AIaaS represents a complete philosophical break from traditional software. We're not buying tools anymore. We're buying outcomes. Decisions. Actions. Intelligence itself.

The Great Business Model Migration

From SaaS to AIaaS: What Actually Changes

Let me paint you a picture of how radical this shift really is.

Traditional SaaS: You pay Salesforce $150 per user per month. Whether your sales rep uses it for 2 hours or 200 hours, the cost stays flat. Predictable? Yes. Efficient? Hell no.

AIaaS utility model: You pay for actual intelligence consumed. Your AI analyzes 10,000 customer support tickets? That's X tokens. It writes 500 personalized emails? That's Y tokens. Slow month? Your bill drops. Busy season? It scales up.

Snowflake popularized this model for data warehousing, and its market valuation shows how investors reward consumption-based pricing. The company went from zero to roughly $60 billion by letting customers pay for what they actually use.

But here's where it gets interesting for AI. Unlike data storage, intelligence consumption is wildly variable. One complex reasoning task might consume 100x the tokens of a simple query. Suddenly, your IT budget becomes as unpredictable as your electricity bill during a heat wave.
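To make that variability concrete, here is a minimal Python sketch of per-token billing. The prices and token counts are made-up assumptions for illustration, not any provider's actual rates, and `TokenPrice` and `request_cost` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Hypothetical prices in USD per million tokens (not real provider rates)."""
    input_per_million: float
    output_per_million: float


def request_cost(price: TokenPrice, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request under simple per-token billing."""
    total = (input_tokens * price.input_per_million
             + output_tokens * price.output_per_million)
    return total / 1_000_000


# Illustrative numbers only.
price = TokenPrice(input_per_million=3.0, output_per_million=15.0)

simple_query = request_cost(price, input_tokens=500, output_tokens=200)
complex_task = request_cost(price, input_tokens=50_000, output_tokens=20_000)

print(f"simple query: ${simple_query:.4f}")
print(f"complex task: ${complex_task:.4f}")
print(f"ratio: {complex_task / simple_query:.0f}x")
```

With these assumed numbers, the complex task costs 100 times the simple query. The model and the price list are identical in both cases, and only the workload mix moved the bill.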

Financial Implications: CFOs, Pay Attention

This shift fundamentally breaks how enterprises budget for technology:

Cash flow chaos: Fixed monthly SaaS costs? Gone. Your AI spend now fluctuates with usage patterns, seasonal demands, and business cycles. That predictable opex line item just became a variable cost nightmare.

ROI calculations implode: How do you calculate return on investment when the investment itself is variable? Traditional software ROI assumed fixed costs against growing benefits. With utility pricing, both sides of the equation become moving targets.

Procurement gets complicated: Your purchasing department can't negotiate a flat enterprise discount anymore. They need to understand token economics, usage patterns, and consumption forecasts. It's like negotiating an electricity contract: you'd better understand your load profile.

I talked to a Fortune 500 CFO last week who put it bluntly: "We've spent two decades moving from capex to opex with SaaS. Now we're moving from fixed opex to variable opex with AI. My entire budgeting process is broken."

The Infrastructure Gold Rush: Following the Money

The Great Infrastructure Rotation

March 2026 marked a violent shift in where smart money is flowing. Venture capitalists and public markets are abandoning "AI wrapper" companies faster than rats fleeing a sinking ship.

The numbers don't lie:

- CoreWeave raised $7.5 billion for AI infrastructure in 2024

- Lambda Labs secured $1.5 billion for GPU cloud services

- Crusoe Energy raised $500 million to build AI data centers powered by wasted natural gas

Meanwhile, AI application companies are struggling to raise at half their previous valuations. Why? Because investors finally realized that without owning the infrastructure layer, you're just a tenant in someone else's building.

New Monopolies in the Making

Think about how utilities work. You don't have 50 water companies running pipes to your house. Natural monopolies form around infrastructure because the economics demand it.

The same thing is happening with AI infrastructure:

Data center operators are the new utilities. Companies like Digital Realty and Equinix aren't just landlords anymore — they're becoming the ConEd and PG&E of the AI age. Control the physical layer, control the market.

AI accelerators are the new oil refinery. NVIDIA's H100 chips aren't just components. They're the refineries that turn raw electricity into refined intelligence. And just like oil refineries, whoever owns them prints money.

Interconnection is everything. The companies controlling the fiber optic cables and network interconnections between AI data centers? They're building the toll roads of the intelligence economy. And every token that flows pays the toll.

The Physics Problem: When Digital Meets Physical

Power as the Ultimate Bottleneck

Here's the uncomfortable truth nobody wants to discuss: AI doesn't scale like software. It scales like heavy industry.

A single state-of-the-art AI data center now demands 100+ megawatts of continuous power. That's not a server farm — that's a steel mill. For context, 100 megawatts powers about 80,000 homes. Every new AI cluster is essentially a small city's worth of electricity demand, concentrated in a single building.

The math gets worse. By 2035, U.S. data center electricity demand is projected to triple. But here's the kicker: the U.S. grid adds only about 1% capacity per year. See the problem? We're trying to pour an ocean through a garden hose.

This isn't a technology problem you can code your way out of. It's physics. And physics always wins.
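The mismatch between a tripling of demand by 2035 and roughly 1% yearly grid growth can be checked with back-of-envelope compounding. This is a rough sketch, not a forecast. The ten-year horizon (a 2025 baseline) is an assumption, and the comparison is loose because data centers are only part of total grid load.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`."""
    return multiple ** (1 / years) - 1


def compounded(rate: float, years: int) -> float:
    """Total growth multiple after compounding `rate` for `years`."""
    return (1 + rate) ** years


YEARS = 10  # assumption: 2025 baseline through 2035

demand_rate = implied_annual_growth(3.0, YEARS)  # demand triples
grid_multiple = compounded(0.01, YEARS)          # grid grows about 1% per year

print(f"demand growth needed: {demand_rate:.1%} per year")
print(f"grid capacity after {YEARS} years: {grid_multiple:.3f}x")

# Sanity check on the household comparison: 100 MW spread over 80,000 homes.
kw_per_home = 100 * 1000 / 80_000
print(f"implied average draw: {kw_per_home} kW per home")
```

Under those assumptions, tripling in a decade means data center demand grows about 11.6% a year, while a grid growing 1% a year ends the decade only about 10% larger. The household figure works out to an average draw of 1.25 kW per home.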

Geographic Constraints: The New AI Geography

Forget about locating your data center based on tax incentives or talent pools. In the AI utility era, you go where the power is.

Northern Virginia used to dominate data centers because of proximity to internet exchanges. Now? Texas, Iowa, and North Dakota are the new hotspots. Why? Abundant power, friendly regulators, and communities desperate for tax revenue.

But even established hubs are hitting limits. Dominion Energy in Virginia recently announced a moratorium on new data center connections above 100MW. The grid simply can't handle more.

This creates a fascinating dynamic. Geographic regions with excess power generation become the new centers of AI compute. Suddenly, a wind farm in West Texas or a nuclear plant in Illinois becomes strategic AI infrastructure.

We're watching the emergence of "AI industrial zones" — geographic clusters where massive power availability enables concentrated intelligence production. It's the 21st century version of locating steel mills near coal mines.

The Energy Economics of Intelligence

Tech Giants as Energy Companies

The biggest tell that we've entered a new era? Microsoft, Google, and Amazon are becoming energy companies.

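Microsoft signed a 20-year power purchase agreement with Constellation Energy to restart Three Mile Island Unit 1. Yes, that Three Mile Island, although Unit 1 is not the reactor involved in the 1979 accident. The deal secures about 835 megawatts of carbon-free power, and Microsoft is willing to underwrite a reactor restart to get it.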

Amazon bought a 960-megawatt data center campus directly connected to a nuclear power station in Pennsylvania. They're cutting out the middleman — no grid, no transmission losses, just direct nuclear-to-AI power.

Google? They're signing Power Purchase Agreements (PPAs) for gigawatts of renewable energy, essentially becoming one of the world's largest energy buyers. Their 2025 energy procurement exceeded the electricity consumption of many small countries.

These aren't sustainability plays. This is about securing the raw material of the AI age — electricity. When intelligence becomes a utility, power becomes your supply chain.

Community and Regulatory Challenges

Here's where things get politically spicy. When a single AI data center can spike local electricity rates, communities start asking hard questions.

Microsoft learned this the hard way in Iowa. Their proposed AI facility would have consumed 15% of the local utility's capacity. Residents freaked out about their power bills. Microsoft had to launch a "Community-First AI Infrastructure" program, essentially paying off locals with job training and infrastructure investments.

The regulatory framework is scrambling to catch up. States are implementing "AI load reviews" for facilities over 50MW. Environmental groups are challenging permits based on grid stability concerns. NIMBYism isn't just for housing anymore — it's coming for AI infrastructure.

This creates a fascinating paradox. AI companies need massive amounts of power, but the communities that host them bear the infrastructure burden. It's the classic tragedy of the commons, except the common resource is the power grid itself.

Strategic Implications for Business Leaders

What This Means for Your AI Strategy

Alright, let's get practical. You're running a business, not a utility company. How do you navigate this shift?

First, throw out your old IT budgeting models. Consumption-based AI pricing means your technology costs now scale with your business activity. Busy season? Higher AI bills. Slow quarter? Costs drop. Your financial planning needs to account for this variability.

Second, infrastructure dependencies matter more than features. When evaluating AI vendors, don't just look at capabilities. Ask about their infrastructure stack. Who provides their compute? What's their power redundancy? A great AI model running on shaky infrastructure is worthless when you need it most.

Third, consider hybrid strategies. You don't need to go full utility immediately. Many enterprises are running hybrid models — baseline AI capabilities on fixed-price contracts, with utility pricing for peak loads. It's like having both a power generator and a grid connection.

Fourth, location suddenly matters for digital services. If your AI workloads are significant, proximity to major AI data centers reduces latency and costs. We're seeing companies relocate technical operations to be closer to AI compute clusters. The cloud made location irrelevant — AI is making it critical again.
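The hybrid strategy in the third point is easy to model before you negotiate. The sketch below compares a flat fee with included tokens plus overage against pure pay-per-token pricing. Every fee, rate, and volume is hypothetical, as are the function names.

```python
def hybrid_monthly_cost(tokens_used: int,
                        baseline_fee: float,
                        included_tokens: int,
                        overage_per_million: float) -> float:
    """Flat fee covers `included_tokens`; usage above that is billed per token."""
    overage = max(0, tokens_used - included_tokens)
    return baseline_fee + overage * overage_per_million / 1_000_000


def pure_usage_cost(tokens_used: int, price_per_million: float) -> float:
    """Straight utility pricing: every token is billed at the same rate."""
    return tokens_used * price_per_million / 1_000_000


# All figures are hypothetical contract terms.
BASELINE_FEE = 2_000.0      # USD per month
INCLUDED = 500_000_000      # tokens covered by the flat fee
OVERAGE_RATE = 6.0          # USD per million tokens above the allowance
ON_DEMAND_RATE = 5.0        # USD per million tokens, no commitment

months = [("slow", 200_000_000), ("typical", 500_000_000), ("peak", 900_000_000)]
for label, tokens in months:
    hybrid = hybrid_monthly_cost(tokens, BASELINE_FEE, INCLUDED, OVERAGE_RATE)
    usage = pure_usage_cost(tokens, ON_DEMAND_RATE)
    print(f"{label:8s} hybrid=${hybrid:,.0f}  pure usage=${usage:,.0f}")
```

In this toy contract the hybrid plan loses in a slow month ($2,000 versus $1,000) and wins in typical and peak months. That is the trade: you pay for predictability when usage is low and get it back when usage is high.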

Preparing for the Transition

Here's your tactical playbook for the next 18 months:

Audit your AI consumption patterns. Before you can optimize for utility pricing, you need to understand your usage. Track token consumption across different use cases. Build load profiles. Understand your peaks and valleys.

Renegotiate vendor contracts with flexibility in mind. Lock in hybrid pricing models that let you benefit from both predictable baseline costs and variable scaling. Push for transparent token pricing. Demand usage analytics.

Build internal intelligence budgets. Just like departments have cloud budgets, they need AI token budgets. Make consumption visible. Create incentives for efficient intelligence usage. Waste in a utility model directly hits your bottom line.

Invest in AI efficiency. In a consumption model, efficiency equals money. Techniques like prompt optimization, caching, and edge inference become critical cost controls. With linear per-token pricing, a 20% reduction in token usage is a 20% cost savings.

Plan for infrastructure constraints. If your AI strategy depends on massive scale, start thinking about infrastructure availability now. Where will your compute run? What happens if regional capacity is constrained? Build optionality into your plans.
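The audit and budgeting steps in this playbook don't need heavy tooling to get started. Here is a minimal sketch of a usage ledger that tracks tokens by use case, department, and day, then flags budget overruns and reports a peak-to-average ratio as a crude load profile. `UsageLedger`, the departments, and all the numbers are hypothetical, and a real setup would pull usage from your provider's reporting rather than manual calls.

```python
from collections import defaultdict


class UsageLedger:
    """Tracks token usage and compares it with per-department budgets."""

    def __init__(self, budgets):
        self.budgets = dict(budgets)          # monthly token budget per department
        self.by_use_case = defaultdict(int)
        self.by_department = defaultdict(int)
        self.by_day = defaultdict(int)

    def record(self, department, use_case, day, tokens):
        """Add one usage record to every rollup."""
        self.by_use_case[use_case] += tokens
        self.by_department[department] += tokens
        self.by_day[day] += tokens

    def peak_to_average(self):
        """Busiest day divided by the average day: a crude load-profile metric."""
        daily = list(self.by_day.values())
        return max(daily) / (sum(daily) / len(daily))

    def over_budget(self):
        """Departments above budget, mapped to how many tokens they are over."""
        return {
            dept: used - self.budgets[dept]
            for dept, used in self.by_department.items()
            if dept in self.budgets and used > self.budgets[dept]
        }


# Hypothetical budgets and usage records.
ledger = UsageLedger({"support": 1_000_000, "sales": 500_000})
ledger.record("support", "ticket_triage", "2026-03-02", 400_000)
ledger.record("support", "ticket_triage", "2026-03-03", 700_000)
ledger.record("sales", "email_drafts", "2026-03-02", 200_000)
ledger.record("sales", "email_drafts", "2026-03-04", 200_000)

print(dict(ledger.by_use_case))
print(f"peak-to-average: {ledger.peak_to_average():.2f}")
print(f"over budget: {ledger.over_budget()}")
```

In this example, support lands 100,000 tokens over its budget and the busiest day runs at 1.4 times the daily average. Those are the two numbers a utility-style contract negotiation will ask you for.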

The New Rules of the Game

We're witnessing the birth of a new economic model for intelligence itself. The shift from SaaS to utility pricing isn't just a billing change — it's a fundamental rewiring of how businesses create, capture, and consume value from AI.

The winners in this new world won't necessarily be those with the best algorithms. They'll be those who understand the physics and economics of intelligence delivery. Those who secure infrastructure access. Those who optimize consumption. Those who think like utility operators, not software vendors.

For business leaders, the message is clear: Your AI strategy can no longer be divorced from infrastructure reality. The age of abstract digital transformation is over. We're entering the era of physical digital infrastructure, where bits meet atoms and intelligence flows like electricity.

The companies that understand this shift — and act on it now — will have sustainable competitive advantages. Those that don't? They'll be stuck paying whatever the utility companies demand, struggling with unpredictable costs and constrained access.

The future of AI isn't just about what intelligence can do. It's about who controls the pipes it flows through. And right now, those pipes are being built by companies thinking in gigawatts, not gigabytes.

Welcome to the utility era of AI. Better start thinking like a power company.
